My Company: Tachos High Performance Computing

Buy IBM Power. Really.

I have been rather silent lately because I’ve been hard at work on my startup, Tachos High Performance Computing. It’s time to talk about what we’re all about. My cofounders and I are now in the business of selling IBM Power for HPC applications. We’re working directly with IBM to deliver an offering to the HPC market with unique value that nothing else matches.

If you’ve been in HPC as long as I have, you have the same question I had when my two cofounders approached me.

Why should HPC pay attention to IBM Power?

The reality is it’s been a long time since IBM was a major player in HPC. They made a strategic decision a few years ago to focus on databases, and frankly, if I were going to write about IBM’s database technology, I’d sound like St Paul after his Damascus experience. If you’re running SAP, Oracle, or SQL, you need to stop what you’re doing right now, this second, and switch to Power. When it comes to databases, everyone else is building nice sedans while IBM is building F1 race cars. But listen, I’m not a database guy, I’m a CFD guy. Or maybe I’m more an HPC guy now.

Every chip has design focuses. With IBM Power, it’s data throughput. The cores are designed to feed off the memory architecture, which is in turn designed to maximize bandwidth. Currently, Power10’s unique architecture enables it to have 16 channels of DDR4 on the socket, delivering 400 GB/s of theoretical bandwidth.

Memory channels alone aren’t enough. The Power SMT-8 core is designed first and foremost to maximize that memory throughput, i.e. it is consciously designed to be able to consume data as fast as the main memory can deliver it, something that is easier said than done. Realized bandwidth is usually 20%-30% below the theoretical value.

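Now, the theoretical bandwidth of a CPU is a simple formula, where speed is the memory transfer rate in MT/s and 8 is the number of bytes each 64-bit channel moves per transfer:

GB/s = speed x channels x 8 / 1024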

So if I have 12 channels of DDR5-4800 on my Zen 4 EPYC CPU, I have, in theory:

4800 x 12 x 8 / 1024 = 450 GB/s

8 channels of DDR4-3200 in Zen 3 EPYC and Ice Lake Xeon give, in theory:

3200 x 8 x 8 / 1024 = 200 GB/s
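If you want to run these numbers for your own hardware, here is a minimal sketch of the formula in Python. The function name and the `bytes_per_transfer` parameter are my own labels for illustration, and the division by 1024 simply follows the convention used in this post.

```python
def theoretical_bandwidth_gbs(speed_mts, channels, bytes_per_transfer=8):
    """Peak memory bandwidth per the formula above: speed x channels x 8 / 1024.

    speed_mts is the DIMM transfer rate in MT/s (e.g. 4800 for DDR5-4800).
    bytes_per_transfer assumes a 64-bit data path per channel.
    """
    return speed_mts * channels * bytes_per_transfer / 1024


# The two configurations worked out above.
zen4_peak = theoretical_bandwidth_gbs(4800, 12)  # 12 channels of DDR5-4800
ddr4_peak = theoretical_bandwidth_gbs(3200, 8)   # 8 channels of DDR4-3200

print(f"12 x DDR5-4800: {zen4_peak:.0f} GB/s")
print(f" 8 x DDR4-3200: {ddr4_peak:.0f} GB/s")
```

Swap in your own transfer rate and channel count to get the ceiling for any other socket.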

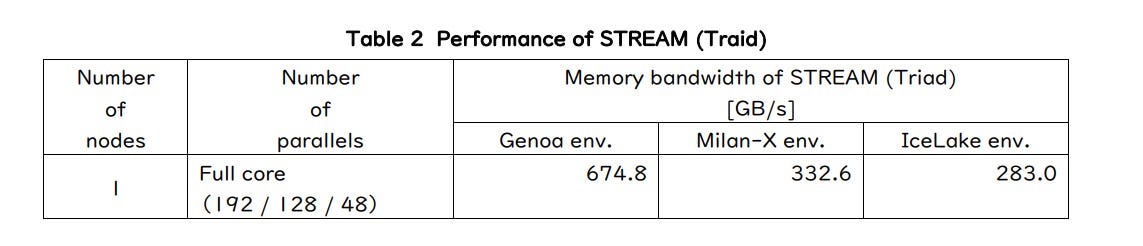

We all know that computers are faster in theory than reality, so it should come as no surprise that in Stream Triad benchmarks, Zen 4 EPYC doesn’t quite deliver that bandwidth. This benchmark by Global HPC has each Zen 4 CPU delivering about 337 GB/s in Stream Triad (75% efficient), while the Zen 3s serve up 166 GB/s (83% efficient), and Ice Lake is trailing at 141 GB/s (70% efficient).
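The efficiency percentages are just measured Stream Triad bandwidth divided by the theoretical peak. A small sketch, using only the figures quoted in this post (the dictionary labels are mine):

```python
# (measured Stream Triad GB/s, theoretical peak GB/s), as quoted in this post.
stream_results = {
    "Zen 4 EPYC": (337, 450),
    "Zen 3 EPYC": (166, 200),
    "Ice Lake Xeon": (141, 200),
}


def efficiency(measured_gbs, peak_gbs):
    """Fraction of theoretical bandwidth actually sustained."""
    return measured_gbs / peak_gbs


efficiencies = {
    name: efficiency(measured, peak)
    for name, (measured, peak) in stream_results.items()
}

for name, eff in efficiencies.items():
    print(f"{name}: {eff:.1%} of peak")
```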

Power10’s OMI memory fabric can sustain 1.4 TB/s of bandwidth. The catch is the memory controller is on the DIMM, not the CPU, so the socket ultimately gets only as much bandwidth as the memory product can deliver. Currently, Power10 has 16 channels of DDR4-3200 per socket:

3200 x 16 x 8 / 1024 = 400 GB/s

With a bit of sleuthing, IBM’s benchmark for this chip works out to around 360 GB/s (they say 2.75x faster than Power9, which comes in at 132 GB/s). That’s even faster than Zen 4 EPYC.
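For anyone who wants to check the sleuthing, here is the arithmetic as a short sketch. The inputs are the figures quoted in this post; the efficiency number it prints is my own derived estimate, not something IBM has published.

```python
# Figures quoted in this post.
power9_stream_gbs = 132       # Power9 Stream result
power10_speedup = 2.75        # IBM's stated Power10 vs Power9 factor
power10_peak_gbs = 3200 * 16 * 8 / 1024  # 16 channels of DDR4-3200

# Derived estimate (an inference from the two figures above).
power10_stream_gbs = power9_stream_gbs * power10_speedup
power10_efficiency = power10_stream_gbs / power10_peak_gbs

print(f"Estimated Power10 Stream: {power10_stream_gbs:.0f} GB/s")
print(f"Fraction of theoretical peak: {power10_efficiency:.1%}")
```

If that estimate holds, Power10 is sustaining a noticeably larger share of its theoretical bandwidth than the x86 parts above.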

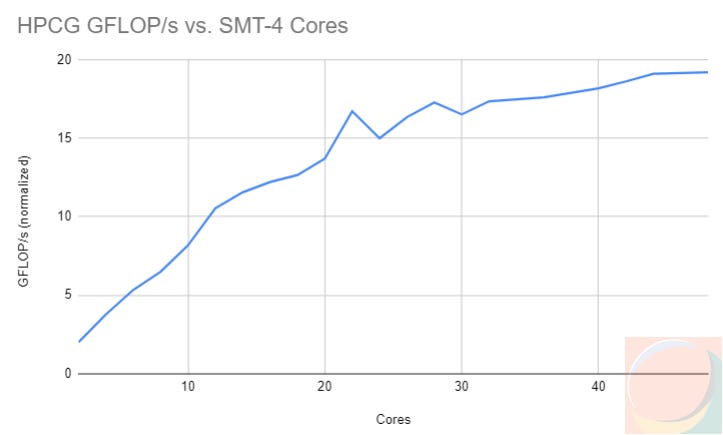

In HPCG, a benchmark that is affected by both L3 cache and memory bandwidth, a 48-core Power10 CPU can sustain 30 GFLOP/s, compared to a 128-core EPYC 9754 sustaining 23 GFLOP/s. And guess what…that Power10 CPU still has a lot more floating-point muscle left to unlock. The CPU started getting bandwidth-choked right around 24 cores and looks to have another O(1.7x) speedup available when IBM releases its next-gen memory technology.
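To put those HPCG numbers on a per-core footing, here is a rough sketch using only the two results quoted above. Per-core HPCG is a crude metric, since the benchmark is limited by memory rather than core count, so treat this as illustration only.

```python
# (sustained HPCG GFLOP/s, core count), as quoted in this post.
hpcg_results = {
    "Power10": (30.0, 48),
    "EPYC 9754": (23.0, 128),
}

per_core = {
    name: gflops / cores
    for name, (gflops, cores) in hpcg_results.items()
}

for name, value in per_core.items():
    print(f"{name}: {value:.3f} GFLOP/s per core")

ratio = per_core["Power10"] / per_core["EPYC 9754"]
print(f"Power10 per-core advantage: {ratio:.2f}x")
```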

In other words, when it comes to memory throughput, x86 isn’t playing the same game as Power.

On top of all that bandwidth, the chip is a floating-point beast. With four floating-point units per SMT-4 core, it’s capable of doing a lot of math. With a 48-core P10 DCM, even that 400 GB/s of bandwidth isn’t enough, as my HPCG test shows.

So if you’ve got really efficient, vectorized code, the 24-core P10 SCM should be plenty for you. But here’s the crazy thing - since the memory controllers are on the DIMMs, not the CPU, that means when IBM launches its next generation of memory technology, P10s can have even more performance unlocked.

But wait, there’s more.

I’m not going to stop talking about CFD and general HPC. Once we get our new website done, most Tachos-related stuff will be on our company blog. However, there’s a lot more to say about IBM products and why they’re great for HPC. IBM’s taken a little break from HPC for a variety of reasons, but they’ve got some incredible technology cooking up, and I’m pretty excited to share it when I can. At Tachos, we’re working on a really special cloud offering.

Speaking of that, if you’re interested in HPC cloud with no egress charges, please email me at info@tachos-hpc.com or shoot me a message on LinkedIn.
